No subject got more play at the 2025 RSA Conference, where more than 40,000 security and technology professionals gathered, than this one: AI is reshaping the entire world of security. Defenders and attackers alike can now deploy machine intelligence at scale, from near-instant detection to adaptive threat simulation. This convergence has set off a new kind of arms race, one based on algorithmic agility rather than human speed.

On the attack side, AI is accelerating that trend, cutting breakout times from hours to minutes. Attackers use generative and agentic AI to create polymorphic malware, automate social engineering, and run deepfake phishing campaigns that bypass legacy filters. Defenders, meanwhile, are integrating AI into Endpoint Detection and Response (EDR), Extended Detection and Response (XDR), and security operations centers (SOCs) to sift through anomalies and predict vulnerabilities before attackers exploit them. The result is a security environment that evolves as quickly as the models driving it.

Source: https://onlinedegrees.sandiego.edu/cyber-security-specialist-career-guide/

On the one hand, AI provides unmatched insight into network activity and can automate detection and triage at a scale few human teams could ever hope to match. On the other, it expands the attack surface with threats like data poisoning, model hijacking, and AI-generated disinformation. CISOs and IT leaders will have to walk a careful line between the two: harnessing AI while hardening their systems against its dark side.

Opportunities: Anomaly Detection, Threat Intelligence, Auto-Response (and More)

Context-Aware Anomaly Detection

Anomaly detection is one of the earliest and most established AI applications in cybersecurity. Instead of searching for known signatures or predetermined correlation rules, these models establish a behavioral baseline for every user, endpoint, and process, then flag deviations that might indicate compromise.
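As a rough illustration of the baseline-and-flag idea described above, here is a minimal sketch that uses a simple z-score. Real platforms use far richer models; the function names, the sample transfer volumes, and the threshold of three standard deviations are all assumptions made for this example.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarize past behavior as (mean, standard deviation)."""
    return mean(history), stdev(history)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value that sits more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # No variation in the history: any different value is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily outbound transfer volumes (MB) for one account.
history_mb = [120, 135, 110, 128, 140, 125, 132]
baseline = build_baseline(history_mb)

typical_day = is_anomalous(130, baseline)     # close to the usual volume
bulk_transfer = is_anomalous(2400, baseline)  # far outside the usual volume
print(typical_day, bulk_transfer)
```

A per-entity baseline like this is cheap to maintain, which is one reason the approach scales to every user, endpoint, and process.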

In practice, such systems examine millions of signals from network traffic, identity systems, and application logs. If, for example, an employee account suddenly starts transferring data to a machine it has never contacted before, or shows a sharp increase in authentication attempts, an AI-based next-gen EDR or XDR platform can flag and quarantine the activity within seconds.

Source: https://www.manageengine.com/log-management/ueba/resources/detecting-anomalies-the-what-the-why-the-how.html

Advanced systems now add federated learning and graph-based analytics to correlate activity across disparate environments. This helps organizations uncover lateral movement and insider threats that would otherwise go unnoticed. The upshot: faster detection cycles, shorter dwell times, and better protection against zero-day exploits.

Predictive Threat Intelligence and Risk Anticipation

AI turns traditional reactive threat intelligence into a predictive capability. Machine-learning techniques digest and correlate a vast amount of data (threat feeds, open-source intelligence, dark-web chatter, and internal telemetry, among them) to capture early indicators of compromise.

Predictive systems can detect emerging exploit patterns, prioritize the vulnerabilities most relevant to your tech stack, and forecast attack likelihood based on real-world adversary behavior. For CISOs, this means reassessing security posture as often as daily rather than quarterly.
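To make the prioritization step concrete, here is a small sketch that ranks findings by severity weighted by a predicted exploit likelihood, and ignores anything that is not in the organization's stack. The `Vulnerability` fields, the scoring formula, and the placeholder identifiers are assumptions for illustration, not how any particular product scores risk.

```python
from dataclasses import dataclass

@dataclass
class Vulnerability:
    vuln_id: str               # placeholder identifier, not a real CVE
    severity: float            # 0-10, e.g. a CVSS-style base score
    exploit_likelihood: float  # 0-1, assumed output of a predictive model
    in_tech_stack: bool        # does the affected software run in our environment?

def priority(v):
    """Severity weighted by exploit likelihood; zero if the asset is not in our stack."""
    if not v.in_tech_stack:
        return 0.0
    return v.severity * v.exploit_likelihood

findings = [
    Vulnerability("EXAMPLE-A", 9.8, 0.05, True),   # severe but rarely exploited
    Vulnerability("EXAMPLE-B", 7.5, 0.90, True),   # less severe, actively exploited
    Vulnerability("EXAMPLE-C", 10.0, 0.95, False), # irrelevant to this environment
]

ranked = sorted(findings, key=priority, reverse=True)
for v in ranked:
    print(v.vuln_id, round(priority(v), 2))
```

In this toy ranking the actively exploited finding outranks the more severe one, which is the shift from severity-only patching that predictive intelligence aims for.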

Industry platforms use natural language processing to interpret unstructured reports and map them to frameworks such as MITRE ATT&CK, threat models, and live security events. This intelligence cycle allows the SOC to neutralize a threat before an exploit reaches operational severity.

Automated Incident Response and SOC Augmentation

Security operations centers (SOCs) are turning to AI to automate manual triage and response. These systems can process thousands of alerts in parallel, filter false positives, and escalate incidents that match known compromise patterns.

AI-driven orchestration engines can also recommend, and sometimes carry out, a response: isolating endpoints, resetting credentials, or rolling out containment rules. First-generation agentic AI goes further, chaining actions between systems autonomously, under policy and human oversight.

Source: https://www.elca.ch/news/how-transition-modern-security-operations-center-soc
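The triage-then-respond flow described above can be sketched as a small policy gate: low-confidence alerts are dismissed, a short allow-list of actions may run automatically at high confidence, and everything else goes to a human. The thresholds, action names, and `Alert` fields below are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical policy: actions the engine may run without analyst approval.
AUTO_APPROVED = {"isolate_endpoint"}

@dataclass
class Alert:
    alert_id: str
    confidence: float     # 0-1 score from a detection model (assumed)
    proposed_action: str  # response recommended by the orchestration engine

def triage(alert, min_confidence=0.5, auto_confidence=0.9):
    """Return 'dismiss', 'auto_execute', or 'escalate' for a single alert."""
    if alert.confidence < min_confidence:
        return "dismiss"        # treated as a likely false positive
    if alert.proposed_action in AUTO_APPROVED and alert.confidence >= auto_confidence:
        return "auto_execute"   # permitted by policy at high confidence
    return "escalate"           # a human analyst makes the call

alerts = [
    Alert("a1", 0.20, "reset_credentials"),
    Alert("a2", 0.95, "isolate_endpoint"),
    Alert("a3", 0.95, "reset_credentials"),
    Alert("a4", 0.70, "isolate_endpoint"),
]
decisions = {a.alert_id: triage(a) for a in alerts}
print(decisions)
```

Keeping the allow-list explicit is one way to express "under policy and human oversight": the engine can only act alone on actions someone has deliberately approved.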

The payoff is a lower mean time to detect (MTTD) and mean time to respond (MTTR), along with a measurable lift in analyst productivity. Instead of drowning in alert noise, teams focus on hunting, forensics, and defense improvements.
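For teams that want to track these two metrics, a minimal calculation might look like the sketch below. It assumes MTTD is measured from the start of malicious activity to detection and MTTR from detection to resolution; definitions vary between organizations, and the incident records are invented for the example.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records with three timestamps each.
incidents = [
    {
        "start": datetime(2025, 1, 1, 8, 0),
        "detected": datetime(2025, 1, 1, 8, 30),
        "resolved": datetime(2025, 1, 1, 10, 30),
    },
    {
        "start": datetime(2025, 1, 2, 14, 0),
        "detected": datetime(2025, 1, 2, 14, 10),
        "resolved": datetime(2025, 1, 2, 15, 10),
    },
]

def mean_minutes(records, start_key, end_key):
    """Average elapsed minutes between two timestamps across all records."""
    return mean(
        (r[end_key] - r[start_key]).total_seconds() / 60 for r in records
    )

mttd = mean_minutes(incidents, "start", "detected")     # activity start -> detection
mttr = mean_minutes(incidents, "detected", "resolved")  # detection -> resolution
print(f"MTTD: {mttd:.0f} min, MTTR: {mttr:.0f} min")
```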

Additional Emerging Opportunities

Beyond these three pillars, several newer AI-driven capabilities are worth watching from a strategic standpoint:

- Deception and Active Defense: AI can generate decoy systems, false credentials, honey-tokens, and dynamic traps that adapt to adversary behavior, becoming more convincing as they learn from the adversary’s tactics.

- Adaptive Micro-Segmentation and Zero-Trust Enforcement: AI algorithms can continuously monitor asset behavior and update segmentation boundaries or trust scores as needed. This enforces a zero-trust posture dynamically rather than through static segmentation.

- Supply-Chain Risk and Model Security: AI-driven tools can flag high-risk vendors, detect anomalous changes in vendor software or hardware, and assess the integrity of third-party components.

- Edge/IoT Security via Edge-AI: Research on IoT and 5G indicates that hybrid and federated learning enable detection closer to the asset, reducing latency and reliance on central infrastructure. This is relevant in decentralized settings (OT, ICS, off-site installations).

- Explainable AI (XAI) in Cybersecurity Workflows: Because SOC analysts and decision makers must be able to trust AI outputs, frameworks that provide interpretability, causal explanations, and transparent reasoning are gaining traction. One example is the work on causal-graph anomaly detection.
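Of the items above, the adaptive trust score is the easiest to picture in code. The sketch below blends each new risk observation into a running score with an exponential moving average and maps the score to a network segment. The smoothing factor, thresholds, and segment names are assumptions chosen only to show the mechanism.

```python
def update_trust(current, observation_risk, alpha=0.3):
    """Blend the previous trust score with new evidence.

    observation_risk is in [0, 1]: 0 means benign, 1 means highly suspicious.
    alpha controls how quickly new evidence outweighs history.
    """
    new_score = (1 - alpha) * current + alpha * (1.0 - observation_risk)
    return max(0.0, min(1.0, new_score))

def segment_for(trust):
    """Map a trust score to a (hypothetical) network segment."""
    if trust >= 0.7:
        return "standard"
    if trust >= 0.4:
        return "restricted"
    return "quarantine"

trust = 0.9
segments = []
# One benign observation followed by increasingly suspicious ones.
for risk in [0.1, 0.9, 1.0, 1.0]:
    trust = update_trust(trust, risk)
    segments.append(segment_for(trust))
print(segments)
```

Because the boundary moves with behavior rather than with a static rule, an asset that starts acting suspiciously is restricted step by step instead of staying trusted until someone rewrites the segmentation policy.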

AI’s value in cybersecurity is its adaptability. Companies that are integrating AI into the very core of their security architecture are getting the visibility and decision speed that older systems can’t match.

New Threats: AI-Generated Attacks and Deepfake Phishing

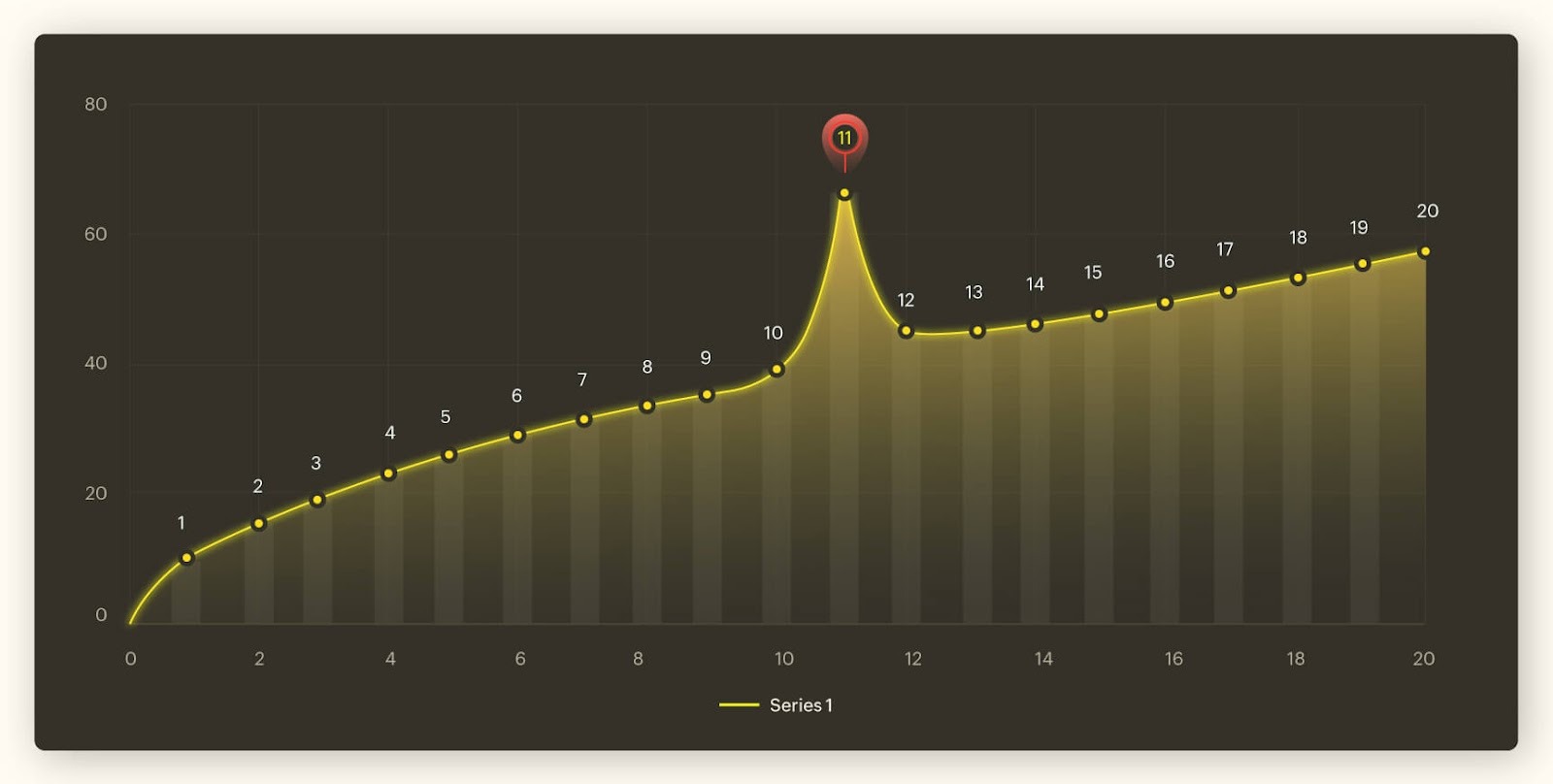

A cross-disciplinary review of more than 900 academic and industry papers indicates that 56% of AI-based cyberattacks occur during the access and penetration phase, where AI is used to automate reconnaissance, vulnerability discovery, or privilege escalation. Another 24% take place during the exploitation and command-and-control stages, suggesting that attackers increasingly use AI to maintain a foothold inside networks.

Source: https://facephi.com/en/protect-your-company-from-deepfakes/

AI can automate tasks that were once laborious, such as scanning IP ranges, identifying weak credentials, and producing polymorphic code that changes each time it is deployed. During the reconnaissance phase, machine learning models analyze open-source intelligence and social media data to map organizations in detail, pinpointing potential insiders or weak endpoints. Once access is gained, reinforcement learning can optimize lateral movement, adjusting payloads on the fly to evade endpoint protection systems.

Traditional rule-based defenses can’t keep up with this degree of automation and adaptability. Attack chains now adapt in real time, moving from initial compromise to breakout in minutes instead of hours as AI systems learn from defenders' reactions.

Ransomware and AI-Augmented Exploitation

Recent research from Cybersecurity at MIT Sloan and Safe Security examined 2,800 ransomware incidents and reported that 80% involved AI components. These ranged from generative phishing and synthetic voice scams to fully automated code generation.

AI assists attackers in several ways:

- Malware generation: Generative models produce polymorphic malware—code that changes its structure or signature on each execution, defeating static detection.

- Password cracking: AI-powered cracking models predict likely password patterns, which lets them work through billions of candidate combinations far more efficiently than blind brute force.

- CAPTCHA bypass and privilege escalation: Vision models and LLM-based interpreters can now defeat CAPTCHA challenges and exploit privilege misconfigurations without human direction.

- Adaptive ransomware: Reinforcement learning algorithms tune encryption and exfiltration behavior in real time to maximize damage before detection.

Source: https://legal.fronteo.com/en/legal-column/ransomware-infection-routes
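From the defender's side, it helps to see why static signatures struggle with code that changes on every run. The sketch below uses two harmless byte strings as stand-ins for variants of the same program: a hash-based signature built from one does not match the other, even though the behavior is identical. The sample data and the `matches_signature` helper are illustrative assumptions, not a real detection engine.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a sample as a hex string."""
    return hashlib.sha256(data).hexdigest()

# Harmless stand-ins for two variants of the same program.
variant_a = b"print('hello')\n"
variant_b = b"print('hello')  # extra bytes, same behavior\n"

# A static signature database that has only ever seen variant_a.
signature_db = {sha256_hex(variant_a)}

def matches_signature(sample: bytes) -> bool:
    """Exact-hash lookup, the simplest form of static detection."""
    return sha256_hex(sample) in signature_db

print(matches_signature(variant_a))  # known sample
print(matches_signature(variant_b))  # trivially modified sample
```

This is why the defensive tooling discussed in this article leans on behavior rather than exact byte patterns.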

The combination of scale and precision is what makes these threats existential. Attackers no longer need large teams or technical expertise. AI has democratized cybercrime, lowering the barrier to entry while multiplying its effectiveness.

Deepfake Phishing and Social Engineering 2.0

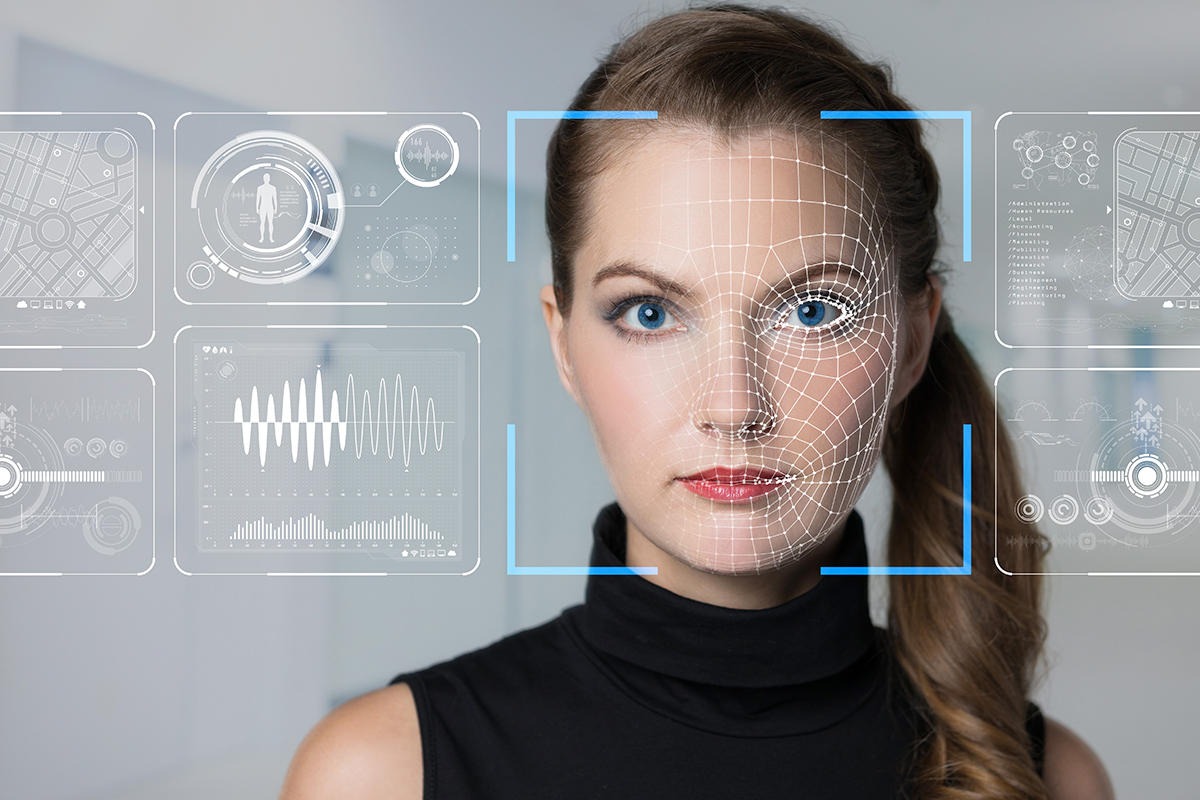

The most troubling of these developments may well be the rise of AI-powered social engineering. Deepfake audio and video tools can convincingly impersonate executives, customer-service representatives, or vendors, tricking employees into sending money or revealing sensitive information.

Large language models generate personalized phishing emails that match a target’s tone, writing style, and organizational context, evading spam filters that rely on keyword matching. Campaigns can reach thousands of people simultaneously, with each message calibrated for believability.

The outcome is a new kind of identity-based deception, one in which attackers weaponize trust itself. With deepfakes and synthetic content only becoming better, enterprises need to consider voice, video, and text authenticity as part of the threat surface.

Why Current Defenses Are Insufficient

Existing defensive tools (SIEMs, firewalls, antivirus) were not built to counter adversaries that learn and adapt. According to the literature review cited earlier, most organizations’ cyber defense infrastructure will become inadequate without AI augmentation.

Source: https://news.cnrs.fr/opinions/the-unforeseen-acceptance-of-deepfakes

Security executives should assume that AI-generated attacks will blend into genuine traffic and adapt faster than human analysts can follow. The one enduring strategy is to embed AI in defensive layers that keep learning, cross-correlate signals, and predict what attackers will try next.

AI vs. AI: The Arms Race

AI on Offense

Offensive AI has fundamentally altered the economics of cyberattacks. Instead of relying on static malware or manual reconnaissance, threat actors now deploy generative models and reinforcement learning agents that continuously refine their tactics.

- Generative deception: Attackers use large language models to produce realistic phishing content, mimic executive communication styles, or craft multilingual lures that target specific departments or partners.

- Autonomous reconnaissance: ML agents trawl through open-source data, map the corporate structure, and correlate leaked credentials to identify the most exploitable assets.

- Polymorphic payloads: AI-generated malware modifies its code structure or encryption routine on every iteration, evading signature-based and heuristic detection.

- Reinforcement-driven persistence: Once inside a network, adaptive agents observe defensive responses, then modify command-and-control behavior, escalation paths, and exfiltration methods to stay undetected.

In effect, AI turns cyber offense into a self-learning system. Attacks evolve on their own, algorithmically optimizing to persist rather than simply to succeed once.

AI on Defense

Defensive AI is catching up quickly. Security vendors and companies are developing models that crunch billions of telemetry events per day, correlate anomalies across multiple domains, and even simulate potential breach activity.

- Predictive defense: Platforms such as Microsoft Security Copilot, Darktrace, and Palo Alto Networks Cortex XSIAM use AI to anticipate what attackers will try by spotting subtle precursors of compromise.

- Deceptive AI: Some defense platforms create synthetic environments, such as adaptive honeypots, fake credentials, and decoy servers, that attract intruders, learn from their behavior, and profile them in real time.

- Agentic SOC support: LLM-based assistants help analysts with investigation, alert triage, and playbook execution by translating natural-language queries into actionable commands, which speeds up incident response.

- AI-driven red teaming: Security teams are increasingly turning to generative adversarial systems that simulate realistic attacks on the organization’s own infrastructure, exposing blind spots before real attackers can exploit them.

Where attackers use AI as a weapon of chaos, defenders use it for context. The divide, then, is architectural: offensive AI learns to disrupt systems; defensive AI learns to understand them.

Examples of Solutions: Microsoft Security Copilot, Darktrace, and More

Microsoft Security Copilot

Security Copilot from Microsoft combines the reasoning of large language models with deep security telemetry from Microsoft 365 Defender and Sentinel. Serving as an intelligent assistant, it helps analysts summarize incidents, correlate alerts, and draft assessments in seconds.

Source: https://www.microsoft.com/en-us/security/blog/2023/12/06/microsoft-security-copilot-drives-new-product-integrations-at-microsoft-ignite-to-empower-security-and-it-teams/

Analysts are able to ask natural-language questions like “Show me devices communicating with the suspicious IP this week.” When confronted with a detection, Copilot pulls in relevant telemetry, maps the dependencies, and suggests mitigating actions. Its value is in context fusion and secure prompt isolation, integrating identity, endpoint, and cloud data, all while maintaining sensitive context within the enterprise.

By replacing manual correlation with automated synthesis, Copilot reduces investigation time and enables analysts to “shift left” from data triage to strategic containment.

Darktrace

Darktrace pioneered self-learning AI in cybersecurity, using unsupervised learning to continuously model what “normal” looks like. Rather than depending on pre-labeled data, it adapts automatically to environmental changes.

Source: https://cybersecurity-excellence-awards.com/candidates/darktrace-9/

When it detects an anomaly, Darktrace RESPOND can automatically contain the potential threat, for example by restricting access or isolating affected accounts, until the SOC confirms it. The response is immediate and reversible, which minimizes dwell time and eases the burden on analysts.

It also strengthens email protection by analyzing linguistic tone, sender behavior, and the relationships between senders to identify potentially harmful mail, stopping spear phishing and business email compromise before a human would notice it. With visibility at multiple levels, it is one of the few solutions able to address both insider threats and external attacks.

Palo Alto Cortex XSIAM

Palo Alto Networks’ Cortex XSIAM aggregates telemetry across endpoint, network, cloud and identity systems into a centralized AI data lake. It relies on predictive modeling and automation to identify and prioritize threats faster than typical SIEM workflows.

Source: https://voi.id/en/technology/341149

The system classifies alerts, aligns them to MITRE ATT&CK techniques, and executes automated responses through predefined playbooks. Its goal is twofold: to reduce Mean Time to Respond (MTTR) and to boost SOC throughput without adding headcount.
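The classify, map, and respond sequence described above can be pictured as two lookups. In the sketch below the ATT&CK technique IDs are real (T1566 Phishing, T1110 Brute Force, T1486 Data Encrypted for Impact), but the alert categories, playbook names, and `plan_response` function are hypothetical and do not reflect how XSIAM is implemented.

```python
# Alert category -> MITRE ATT&CK technique ID (categories are hypothetical).
TECHNIQUE_BY_CATEGORY = {
    "phishing_email": "T1566",     # Phishing
    "password_guessing": "T1110",  # Brute Force
    "file_encryption": "T1486",    # Data Encrypted for Impact
}

# Technique ID -> predefined playbook (playbook names are hypothetical).
PLAYBOOK_BY_TECHNIQUE = {
    "T1566": "quarantine_message",
    "T1110": "lock_account",
    "T1486": "isolate_host",
}

def plan_response(alert_category):
    """Return (technique_id, playbook) for an alert category.

    Unmapped categories yield no technique and fall back to a human analyst.
    """
    technique = TECHNIQUE_BY_CATEGORY.get(alert_category)
    playbook = PLAYBOOK_BY_TECHNIQUE.get(technique, "escalate_to_analyst")
    return technique, playbook

print(plan_response("file_encryption"))
print(plan_response("unrecognized_activity"))
```

Routing through a shared technique ID, rather than mapping alerts straight to actions, is what lets alerts from different sensors converge on the same playbook.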

With large-scale machine reasoning, XSIAM turns siloed data into a unified view of organizational risk. This allows CISOs to quantify detection accuracy and operational efficiency.

SentinelOne Purple AI

SentinelOne Purple AI pairs natural-language querying with predictive telemetry correlation, enabling human-machine collaboration on threat hunting. For example, analysts might ask, “Show me all PowerShell scripts with obfuscated commands on any endpoint” and receive structured, explainable results.

Its natural-language interface democratizes threat analysis, giving junior analysts a way to participate in the process while senior analysts retain oversight. The Predictive Correlation Engine provides pattern-based recommendations and contextual guidance, turning an alert into direct remediation.

Business Strategy: AI-First Security

Traditional security stacks rely on point tools stitched together by human workflows. These boundaries dissolve in an AI-first environment. AI connects everything into a single feedback loop that learns from every incident.

To support that shift, organizations must:

- Build data-centric architectures that unify telemetry from endpoints, identity, and cloud environments.

- Invest in real-time data normalization and labeling, since poor data quality limits model accuracy.

- Introduce secure AI pipelines with model validation and drift monitoring.

- Maintain segregation of AI models and production data to prevent cross-contamination and adversarial influence.
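Drift monitoring, mentioned in the list above, can be approximated with a simple statistic such as the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in production. The sketch below works on pre-binned proportions; the bin values and the 0.25 alert threshold (a commonly cited rule of thumb) are assumptions for illustration.

```python
import math

def population_stability_index(expected_frac, actual_frac, eps=1e-6):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    Inputs are per-bin proportions that each sum to 1.
    eps guards against log(0) and division by zero for empty bins.
    """
    total = 0.0
    for e, a in zip(expected_frac, actual_frac):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical share of events per bin for one model input feature.
training = [0.25, 0.25, 0.25, 0.25]
production_stable = [0.25, 0.25, 0.25, 0.25]
production_shifted = [0.05, 0.15, 0.30, 0.50]

DRIFT_THRESHOLD = 0.25  # rule-of-thumb value, tune per model

for name, dist in [("stable", production_stable), ("shifted", production_shifted)]:
    score = population_stability_index(training, dist)
    print(name, round(score, 3), "drift" if score > DRIFT_THRESHOLD else "ok")
```

A check like this can run on a schedule inside the AI pipeline so that a model trained on last quarter's traffic is flagged for revalidation before its accuracy quietly degrades.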

The new model turns the SOC into a decision-support hub, where humans guide and refine AI rather than manage endless queues of alerts. Security analysts are able to move from tedious monitoring work to more critical activities like validation, red teaming, and scenario testing.

At the same time, agentic assistants handle low-risk tasks such as log correlation and data enrichment so that human operators can focus on strategic judgment. This collaboration between humans and machines lets organizations extend their defensive capabilities without simply growing the team: automation multiplies the team’s force while humans retain oversight.

The skill set for next-generation cybersecurity goes beyond scripting or incident response. Organizations need professionals who understand both security operations and data science fundamentals.

That means:

- Upskilling analysts in model behavior, bias recognition, and adversarial testing.

- Hiring AI-literate SecOps engineers capable of maintaining and fine-tuning models.

- Creating cross-functional teams that bring security operations and data science staff together.

- Establishing AI governance boards to evaluate risks, ethics, and system transparency.

Adopting AI-first security is as much a leadership decision as a technical one. CISOs should approach this transformation with two imperatives:

- Augment human expertise rather than replace it; AI works best when paired with contextual judgment.

- Institutionalize adaptation: Treat every incident as model-training data, every failure as a refinement cycle.

In a future defined by intelligent offense and adaptive defense, organizations that embed AI into their operating DNA will not just survive the next generation of cyber threats; they’ll stay one learning cycle ahead.

It’s time to rethink how your security ecosystem fundamentally works: the architecture that connects data, the teams that make sense of it, and the governance that ties everything back to trust. Artificial intelligence redefines not just what a system can do, but also how fast it can make sense of what’s happening inside it.

Stay protected. Now is the time to start crafting your AI-first cybersecurity roadmap.
