Building with AI starts where autocomplete stops. Using Copilot to speed up boilerplate is helpful, of course, but that is not the same as shipping an AI-native product. Once you move from suggestions in the editor to systems that reason, retrieve context, call tools, and make decisions, the engineering problems change fast.

Source: https://www.creative-elements.ca/what-is-custom-web-design-and-web-development/

Suddenly, AI web development is less about UI polish alone and more about orchestration, latency, evals, fallback logic, and keeping outputs reliable in production. The hard part is not getting an AI agent to “work” in a demo. The hard part is making it useful under real traffic. Below, we break down what that process looks like behind the scenes.

What "AI-Powered" Actually Means in Web Development

A lot of teams say they do AI-powered web development, but they usually mean one of two very different things. The first is AI as a developer tool: Cursor, Copilot, code review assistants, test generation, maybe some help with refactoring or SQL. That improves delivery speed and reduces repetitive work, but its role is limited: it helps engineers move through boilerplate faster.

The second is AI as a product feature. Here, AI is part of the user experience: chat workflows, recommendations, semantic search, personalization, support automation, internal copilots, decision support. At that point, you're going further than accelerating development. You are designing systems around inference, context, tool use, model limits, and unpredictable outputs.

We emphasize this distinction because businesses often budget for one and expect the other. They adopt AI coding tools, ship faster, and assume they are now building an AI product. But faster frontend tickets do not automatically create smarter user journeys. Real AI-powered web development means the application itself can interpret intent and produce value beyond static logic.

Stage 1: Discovery & Architecture

Requirements analysis looks different when AI is involved. A normal web app spec usually defines roles, flows, inputs, outputs, and business rules. An AI feature adds another layer.

- What kind of reasoning is expected?

- What context does the model need?

- What does “good enough” output mean?

- When should the system ask a follow-up question?

- When should it do nothing at all?
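One way to make those questions concrete is to record them per feature and flag the ones nobody has answered yet. The sketch below assumes a hypothetical `AIFeatureSpec` record of our own design; it is not a standard format, just the checklist above expressed in code.

```python
from dataclasses import dataclass, field


@dataclass
class AIFeatureSpec:
    """Hypothetical requirements record for one AI feature."""
    name: str
    reasoning: str                   # what kind of reasoning is expected
    required_context: list[str]      # what context the model needs
    acceptance_criteria: list[str]   # what "good enough" output means
    ask_follow_up_when: list[str] = field(default_factory=list)
    abstain_when: list[str] = field(default_factory=list)

    def open_questions(self) -> list[str]:
        """Return the requirement questions this spec has not answered yet."""
        gaps = []
        if not self.reasoning.strip():
            gaps.append("What kind of reasoning is expected?")
        if not self.required_context:
            gaps.append("What context does the model need?")
        if not self.acceptance_criteria:
            gaps.append('What does "good enough" output mean?')
        if not self.ask_follow_up_when:
            gaps.append("When should the system ask a follow-up question?")
        if not self.abstain_when:
            gaps.append("When should it do nothing at all?")
        return gaps
```

For a support assistant that has context defined but no acceptance criteria, follow-up rules, or abstain rules, `open_questions()` returns those three gaps, which is a useful agenda for the next discovery session.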

Stakeholders may say they want an AI assistant, but under the hood that could mean anything from a simple retrieval chat to a recommendation engine. Those are very different systems with very different failure modes.

AI helps here by accelerating exploration. Teams can use it to turn vague product ideas into structured requirement drafts. It is especially useful for identifying ambiguity in prompts like “make it smarter” or “personalize the experience,” which sound clear in meetings and collapse immediately when you try to model actual system behavior. At this stage, the real work is defining boundaries.

Source: https://proit.ua/iaka-konkurientsiia-na-mizhnarodnomu-rinku-software-development-ta-iaki-pierievaghi-maiut-ukrayinski-rozrobniki/

Once AI becomes part of the product, architecture stops being just a technical diagram and starts driving business outcomes. Besides frontend, backend, and database, you now have to decide whether the product needs RAG, vector search, memory, async workers, model routing, caching, prompt observability, and evals that catch regressions before users do. A simple assistant can quickly expand into a multi-service system with an API layer, model provider, embeddings pipeline, document ingestion, permission controls, telemetry, and fallback logic.

Stack selection gets heavier too: React or Next.js on the frontend, Python or Node on the backend, PostgreSQL for core data, then Redis, object storage, queueing, and semantic retrieval layers on top. At that point, model choice is about latency, cost, reliability, and whether the product still makes economic sense in production.
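Treating model choice as a latency, cost, and reliability trade-off can be sketched as a small routing function. The model names, latencies, and prices below are hypothetical placeholders, not real provider figures; in practice you would fill them from your own load tests and billing data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    p95_latency_ms: int        # measured in your own load tests
    cost_per_1k_tokens: float  # illustrative numbers, not real pricing
    quality_tier: int          # 1 = basic, 3 = strongest reasoning


def choose_model(options, max_latency_ms, min_quality):
    """Pick the cheapest model that meets both the latency budget and the quality floor."""
    eligible = [
        m for m in options
        if m.p95_latency_ms <= max_latency_ms and m.quality_tier >= min_quality
    ]
    if not eligible:
        return None  # caller falls back to a non-AI path or a degraded answer
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


CATALOG = [
    ModelOption("small-fast", 400, 0.2, 1),
    ModelOption("mid", 900, 1.0, 2),
    ModelOption("large-reasoning", 2500, 5.0, 3),
]
```

Returning `None` when nothing fits forces the caller to decide on a fallback explicitly instead of silently blowing the latency budget.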

Stage 2: Design & Prototyping

Once discovery is stable, the next bottleneck is usually speed. Not coding speed in isolation, but the time lost between an idea, a screen, a clickable flow, and something the team can actually react to. That is why many specialists recommend Figma AI and v0.dev. They do not replace product designers or frontend engineers, but they remove a lot of low-value iteration work from the loop.

For teams figuring out how to build AI website experiences, rapid prototyping matters a lot because AI features are hard to judge from specs alone. The problem is not visual polish. It is interaction design: where the AI appears, how much context it gets, when it should ask follow-up questions, what the fallback state looks like, and how users recover when the output is wrong.

Source: https://lform.com/blog/post/the-importance-of-wireframing-and-ux-prototyping-for-web-design-and-development/

That is why design tooling saves real time here.

- Figma AI helps generate first-pass layouts, content structure, and component variations fast enough to test multiple ideas before the team overcommits to one direction.

- v0.dev is useful when you want to move from mockup logic to frontend-shaped output without manually wiring every block from scratch. It is especially good for spinning up rough interfaces for dashboards, assistant panels, search pages, and settings flows that engineers can then harden into real code.

The practical advice is simple: prototype the failure paths. Show what happens when the model is slow, uncertain, off-topic, or missing context. Test where human control needs to stay visible. In AI projects, the fastest teams are usually the ones that use design tools to reduce ambiguity before engineering starts.
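Prototyping the failure paths is easier when every path has a named UI state. The following is a minimal sketch, assuming a hypothetical response shape with `on_topic`, `missing_context`, and `confidence` fields; the thresholds are illustrative, not recommendations.

```python
from enum import Enum


class AssistantState(Enum):
    LOADING = "loading"
    SLOW = "slow"                      # show "still working" and a cancel option
    ANSWER = "answer"
    LOW_CONFIDENCE = "low_confidence"  # show the answer with a "verify this" cue
    OFF_TOPIC = "off_topic"
    NEEDS_CONTEXT = "needs_context"    # ask the user a follow-up question
    ERROR = "error"                    # hand control back to the user


def resolve_state(elapsed_ms, response=None, error=False,
                  slow_after_ms=3000, min_confidence=0.6):
    """Map raw model status to the UI state a prototype should render."""
    if error:
        return AssistantState.ERROR
    if response is None:
        if elapsed_ms >= slow_after_ms:
            return AssistantState.SLOW
        return AssistantState.LOADING
    if not response.get("on_topic", True):
        return AssistantState.OFF_TOPIC
    if response.get("missing_context", False):
        return AssistantState.NEEDS_CONTEXT
    if response.get("confidence", 1.0) < min_confidence:
        return AssistantState.LOW_CONFIDENCE
    return AssistantState.ANSWER
```

Each enum member maps to one screen in the prototype, so designers can check that no state is missing before engineering starts.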

Stage 3: Development

Once the prototype is clear, the development phase becomes a question of leverage. Cursor and GitHub Copilot are strongest inside the codebase. They speed up scaffold generation, refactors, repetitive API wiring, test boilerplate, schema mapping, typed component creation, and documentation drafts. They are also useful for pattern continuation. Once the repository already has conventions, these tools can extend that structure quickly.

Claude API sits in a different part of the system. It is part of the runtime product layer. Teams use it for long-context reasoning, structured generation, summarization pipelines, classification, workflow assistance, and agent-style interactions where the model has to interpret inputs and produce usable outputs inside the application.

Source: https://revelry.co/development-programming-languages/

This is where senior engineers matter most. The acceleration is real, but the hard parts remain architectural. A senior developer decides where AI belongs in the execution path, which logic must stay deterministic, how model outputs are validated, how state moves across the system, and how to keep the product observable under load. They define boundaries between LLM-driven behavior and standard backend logic.
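A common way to keep that boundary explicit is to let the model propose an action as JSON and let deterministic code decide whether to execute it. This sketch assumes a hypothetical action set and refund limit; anything invalid falls back to escalation rather than reaching the execution path.

```python
import json

ALLOWED_ACTIONS = {"answer", "escalate", "refund"}  # hypothetical action set


def validate_model_output(raw: str) -> tuple[str, dict]:
    """Parse model JSON and check its shape; raise ValueError if it is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    return action, data


def decide(raw: str, refund_limit: float = 50.0) -> str:
    """The model proposes an action; deterministic business rules make the final call."""
    try:
        action, data = validate_model_output(raw)
    except ValueError:
        return "escalate"  # invalid output never reaches the execution path
    if action == "refund":
        amount = data.get("amount")
        valid_amount = (isinstance(amount, (int, float))
                        and not isinstance(amount, bool)
                        and 0 < amount <= refund_limit)
        if not valid_amount:
            return "escalate"  # limits are enforced in code, not in the prompt
    return action
```

The design choice is that the prompt can suggest a refund, but only code can approve one, so a prompt injection cannot raise the limit.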

In real systems, the implementation usually looks layered. A typical stack might use:

- Next.js for the app layer;

- Python or Node for orchestration services;

- PostgreSQL for transactional state;

- Redis for short-lived context and caching;

- a vector index for retrieval;

- Claude API for reasoning-heavy paths.

Around that, senior developers add tracing, rate limiting, feature flags, eval hooks, and fallback behavior. The fastest workflow is usually this: let AI tools generate the first 60 to 70 percent of implementation, then let senior engineers shape the final system behavior.
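Fallback behavior can be as simple as an ordered chain of providers ending in a static response. In this sketch, `primary_model` and `backup_model` are hypothetical stand-ins for real SDK calls; a production version would also add timeouts, backoff, and tracing around each attempt.

```python
import logging
from typing import Callable, Sequence

logger = logging.getLogger("ai.fallback")


def call_with_fallback(prompt: str,
                       providers: Sequence[Callable[[str], str]],
                       static_fallback: str,
                       retries_per_provider: int = 1) -> tuple[str, str]:
    """Try each provider in order and return (source, text); never raises."""
    for provider in providers:
        for attempt in range(retries_per_provider + 1):
            try:
                text = provider(prompt)
            except Exception as exc:  # e.g. timeouts, rate limits, 5xx errors
                logger.warning("%s failed (attempt %d): %s",
                               provider.__name__, attempt + 1, exc)
                continue  # retry the same provider
            if text and text.strip():
                return provider.__name__, text
            logger.warning("%s returned empty output", provider.__name__)
            break  # empty output: move on to the next provider
    return "static", static_fallback


# Hypothetical stand-ins for real model SDK calls.
def primary_model(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def backup_model(prompt: str) -> str:
    return f"backup answer to: {prompt}"
```

Returning the source name alongside the text makes fallback frequency easy to log and chart later.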

Stage 4: Embedding AI Into the Product

The implementation usually comes down to four common layers: AI search, personalization, a RAG-based knowledge base, and AI chat. Each one looks simple in a roadmap deck. In code, each one needs its own implementation approach.

AI search usually starts with semantic retrieval. A standard setup includes embeddings generation, chunking rules, metadata filtering, vector storage, and a reranking layer before results reach the UI. For production, lexical search is still useful, so most teams ship hybrid retrieval instead of relying on vectors alone. That gives better control over exact-match queries, identifiers, structured fields, and domain-specific terms.
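One widely used way to merge lexical and vector results is reciprocal rank fusion (RRF), which scores each document by summing 1 / (k + rank) across result lists. The sketch below assumes both searches already return ranked document ids; `k = 60` is a common default, and a reranker would normally run on the fused list before it reaches the UI.

```python
from collections import defaultdict


def reciprocal_rank_fusion(result_lists, k: int = 60, top_n: int = 5):
    """Merge ranked doc-id lists (e.g. BM25 and vector search) with RRF."""
    scores = defaultdict(float)
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for stable output.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [doc_id for doc_id, _ in ranked[:top_n]]


lexical = ["sku-123", "returns-policy", "shipping-faq"]   # exact-match hits
semantic = ["returns-policy", "refund-guide", "sku-123"]  # embedding hits
```

Here `reciprocal_rank_fusion([lexical, semantic])` ranks `returns-policy` first and keeps the exact-match `sku-123` near the top, which is the behavior hybrid retrieval is meant to protect.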

With personalization, the system adapts outputs based on user role, behavior, history, or session context. That usually means combining transactional data from PostgreSQL, event data from analytics pipelines, feature flags, CRM or product usage signals, and a serving layer that can inject the right context into model calls or ranking logic. Sometimes personalization is LLM-driven, sometimes it is a rules-and-ML hybrid. The important part is keeping context structured.
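Keeping context structured can mean assembling a small, whitelisted JSON object instead of pasting raw records into a prompt. The field names here (`role`, `plan`, `ts`, and the feature flag names) are hypothetical; the point is that only selected fields are passed on, so data such as an email address never reaches the model.

```python
import json


def build_user_context(profile: dict, recent_events: list[dict], flags: dict,
                       max_events: int = 5) -> str:
    """Assemble a compact, structured context block for a model call or ranker."""
    newest = sorted(recent_events, key=lambda ev: ev["ts"], reverse=True)[:max_events]
    context = {
        "role": profile.get("role", "unknown"),
        "plan": profile.get("plan", "free"),
        # Only the newest events, and only whitelisted fields, to limit tokens and leakage.
        "recent_activity": [{"type": ev["type"], "item": ev.get("item")} for ev in newest],
        "features": sorted(name for name, enabled in flags.items() if enabled),
    }
    return json.dumps(context, sort_keys=True)
```

Because the output is deterministic JSON, the same block can feed a prompt, a ranking model, or a log line for debugging.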

Source: https://dreamix.eu/insights/top-ai-software-development-companies/

A RAG-based knowledge base is where architecture quality matters most. Good RAG is not simply “upload PDFs and ask questions.” The ingestion pipeline has to normalize documents, preserve document hierarchy, store metadata, version updates, and support reindexing when source content changes. The chunking strategy affects everything: too small and you lose context, too large and retrieval quality drops. Then comes retrieval orchestration: top-k selection, metadata filters, reranking, prompt assembly, citations, and fallback behavior when recall is weak. Most mature implementations also separate ingestion from serving, so indexing runs asynchronously while query-time services stay fast and predictable.
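The chunking trade-off can be sketched with fixed-size word windows plus overlap, where each chunk carries its section path and document version so reindexing can replace stale entries. Word counts stand in for tokens here as a simplifying assumption; real pipelines usually count tokens with the model's tokenizer and split on headings first.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    section: str   # preserved hierarchy, e.g. "Billing > Refunds"
    version: int   # lets reindexing replace stale chunks
    index: int
    text: str


def chunk_section(doc_id, section, version, text, max_words=200, overlap=40):
    """Split one section into overlapping word windows that keep their metadata."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    chunks = []
    step = max_words - overlap
    for i, start in enumerate(range(0, max(len(words), 1), step)):
        window = words[start:start + max_words]
        if not window:
            break  # empty section: nothing to index
        chunks.append(Chunk(doc_id, section, version, i, " ".join(window)))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the section
    return chunks
```

Raising `max_words` keeps more context per chunk; raising `overlap` reduces the chance that an answer is split across a boundary, at the cost of a larger index.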

AI chat sits on top of all of this as the user-facing orchestration layer. A serious implementation is usually stateful, streaming, and tool-aware. The backend needs session memory, message history compression, structured tool calling, schema validation, and routing logic for different intents.
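Message history compression often starts with a token budget: keep the newest turns that fit and collapse the rest. This is a minimal sketch that approximates tokens by whitespace-separated words and inserts a placeholder instead of a real summary; a production system might ask a cheaper model to write that summary.

```python
def compress_history(messages, max_tokens=1000,
                     count_tokens=lambda s: len(s.split())):
    """Keep the newest messages that fit the budget; fold older ones into a stub."""
    kept, used = [], 0
    for msg in reversed(messages):
        cost = count_tokens(msg["content"])
        if used + cost > max_tokens:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    dropped = len(messages) - len(kept)
    if dropped:
        # Placeholder summary; the stub itself is not counted against max_tokens.
        stub = {"role": "system",
                "content": f"[{dropped} earlier message(s) summarized]"}
        return [stub] + kept
    return kept
```

Walking the history from newest to oldest guarantees the latest user turn survives, which matters more for intent routing than early small talk.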

Stage 5: Testing & Deployment

Testing AI in production-grade web systems is closer to validating a distributed runtime than to running a conventional unit-test suite. Automated test case generation is useful early because AI features multiply the number of possible inputs fast. Teams use LLMs to draft unit tests, integration cases, edge-case matrices, and scenario-based QA flows from product requirements, API contracts, and user stories. The output still needs review, but it speeds up coverage creation for flows that would otherwise take days to enumerate manually. The best setup is usually hybrid: AI proposes cases, engineers lock them into deterministic test suites.
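That hybrid setup can be as simple as a reviewed list of dimensions expanded into a deterministic matrix. The dimensions below are hypothetical examples of what a model might suggest from a spec; once engineers approve them, they live in code like any other fixture.

```python
import itertools

# Dimensions an LLM might propose from the spec; engineers review and freeze them.
LOCALES = ["en", "de", "ja"]
QUERY_SHAPES = ["empty", "exact_sku", "long_freeform", "prompt_injection"]
USER_ROLES = ["guest", "customer", "admin"]


def build_case_matrix():
    """Enumerate every combination as a stable, reviewable list of test-case ids."""
    return [
        f"{locale}-{shape}-{role}"
        for locale, shape, role in itertools.product(LOCALES, QUERY_SHAPES, USER_ROLES)
    ]
```

With pytest, the ids can drive a parametrized suite via `@pytest.mark.parametrize("case_id", build_case_matrix())`, so the set of cases only changes through code review.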

Visual regression testing becomes more important once the UI includes streaming responses. Standard snapshot testing is not always enough, so teams usually combine component-level regression checks with browser-based end-to-end runs. The goal is to validate that state transitions render correctly across various scenarios.

Deployment adds another layer of engineering discipline. AI services are usually rolled out behind feature flags, environment-based model configs, and staged release strategies. It is common to ship new prompt versions, retrieval logic, or model routes to a small traffic segment first, then compare latency, completion quality, fallback frequency, and conversion behavior before widening rollout.
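Sending a new prompt version to a small traffic segment usually relies on deterministic bucketing, so the same user always sees the same variant. This sketch hashes a hypothetical experiment name together with the user id; because a user's bucket never changes, raising `rollout_percent` from 5 to 25 keeps the original 5% on the new version.

```python
import hashlib


def rollout_bucket(user_id: str, experiment: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100


def pick_prompt_version(user_id: str, rollout_percent: int,
                        experiment: str = "prompt-v2") -> str:
    """Send a fixed slice of traffic to the new prompt; everyone else stays on v1."""
    return "v2" if rollout_bucket(user_id, experiment) < rollout_percent else "v1"
```

Including the experiment name in the hash means different experiments get independent slices of users rather than always hitting the same early adopters.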

Post-deployment monitoring is where the system becomes maintainable. Standard observability still plays a big role. But AI features also need application-specific telemetry: token usage, retrieval hit quality, hallucination indicators, tool-call success rate, output schema validity, abandonment after AI response, and human override frequency.
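Much of that application-specific telemetry comes down to aggregating per-interaction events into rates. The `AIEvent` fields below are a hypothetical schema covering a few of the signals listed above; hallucination indicators and retrieval quality would need their own evaluators.

```python
from dataclasses import dataclass


@dataclass
class AIEvent:
    """One AI interaction, as a hypothetical telemetry record."""
    tokens: int
    tool_calls: int
    tool_call_failures: int
    schema_valid: bool
    human_override: bool
    abandoned: bool


def summarize(events: list[AIEvent]) -> dict:
    """Aggregate per-interaction events into the rates a dashboard would chart."""
    n = len(events)
    if n == 0:
        return {}
    total_calls = sum(e.tool_calls for e in events)
    failed_calls = sum(e.tool_call_failures for e in events)
    return {
        "avg_tokens": sum(e.tokens for e in events) / n,
        # None when no tools were called, so the dashboard shows "n/a" instead of 0%.
        "tool_call_success_rate": ((total_calls - failed_calls) / total_calls
                                   if total_calls else None),
        "schema_valid_rate": sum(e.schema_valid for e in events) / n,
        "override_rate": sum(e.human_override for e in events) / n,
        "abandonment_rate": sum(e.abandoned for e in events) / n,
    }
```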

The strongest QA pipelines treat AI as a living subsystem rather than a finished feature, and they keep people in the loop, because human input is more valuable than ever.

A Real Project Example

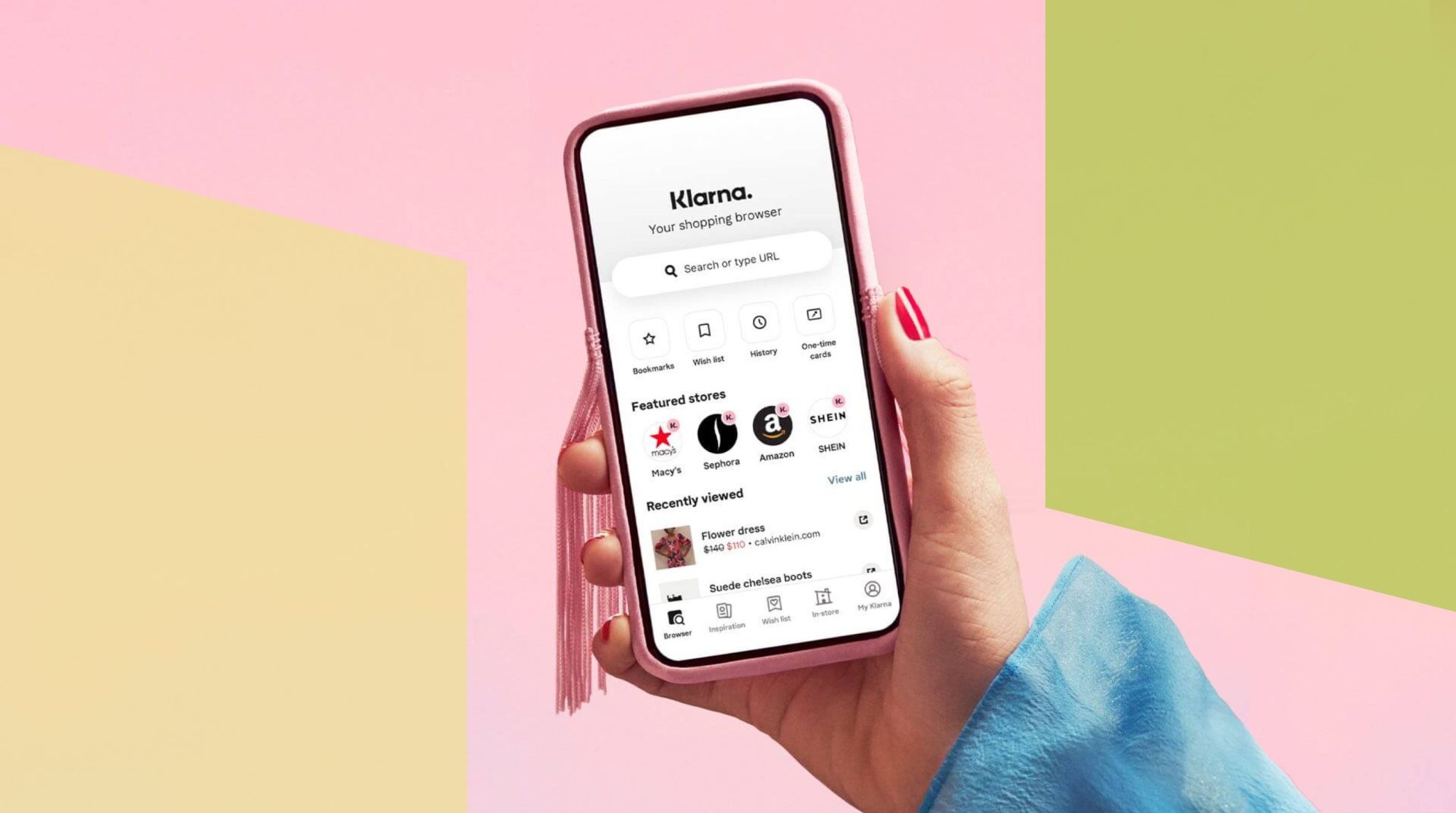

There’s a good public example with Klarna. The company didn’t keep AI as a side widget. It built an AI assistant directly into the customer experience layer to handle a high volume of support interactions at scale.

Source: https://fintechinsider.com.ua/klarna-zapuskaye-funkcziyi-rahunku-ta-keshbeku-za-pokupky-v-zastosunku/

The challenge was straightforward: support volume was growing, users expected faster answers, and legacy workflows were hard to scale economically across markets. The implementation centered on an AI assistant built into Klarna’s service flow, designed to handle frequent requests quickly across many languages and regions.

This rollout shows what an AI web development company should optimize for: real production traffic, response speed, orchestration that keeps responses fast, and measurable business value instead of demo novelty.

The results were significant. Klarna said the assistant handled 2.3 million conversations in its first month, two-thirds of all its customer service chats. It did the equivalent work of 700 full-time agents, cut average resolution time from 11 minutes to under 2, and contributed to a 25% drop in repeat inquiries. The assistant was also live in 23 markets and supported more than 35 languages, showing what AI integration looks like when it is positioned as part of a core product strategy.

Is This Approach Right for Your Project?

The honest answer is that AI is justified only when it changes a business outcome. The key question is simple: Does AI reduce cost, increase conversion, shorten time-to-value, or improve decision quality in a way standard software logic cannot? If the answer is yes, the investment makes sense. If not, adding AI usually creates more complexity than value.

AI is a strong fit when the product has large volumes of unstructured data, repetitive user queries, recommendation opportunities, workflow bottlenecks, or decisions that benefit from context instead of fixed rules. It also makes sense when the business needs a more adaptive experience. In those cases, AI can improve operational efficiency and create a better user experience at the same time.

But AI is often overkill when the workflow is already deterministic. If a standard dashboard, search filter, rules engine, or well-designed form can solve the problem, that is usually the better business decision.

The best projects usually start with one narrow, measurable use case. “We need to reduce support load by 30%,” or “we need users to find the right content in half the time,” or “we need to increase conversion in high-intent flows.” That framing builds trust internally because it keeps the discussion tied to ROI, delivery risk, and real operational value. In other words, AI is the right approach when it solves a business problem better than conventional software. Anything less is usually just expensive positioning.

If you are evaluating where AI can create real operational or product value, this is the stage where a technical conversation matters most. The right implementation can improve user experience, automate high-volume workflows, and open up new product capabilities without turning the platform into an expensive experiment. A good development partner can help you gather the data you need to make that call.

Ready to build your AI-powered product? Let’s talk.
